LCD stands for "liquid crystal display," a technology used in the display screens of many electronic devices. LCM stands for "liquid crystal monitor," and refers specifically to a computer monitor that employs LCD technology.

History

Liquid crystal technology was originally popularized in the display screens of calculators, clocks, digital watches, and other simple computing devices. The earliest of these display screens were all monochromatic.

Advanced Uses

LCD technology has advanced to the point that liquid crystal displays can now show a full range of color. Flat-screen televisions, desktop computer monitors, laptop computers, and mobile telephones all use these color displays.

Differentiation

A computer monitor that uses LCD technology is called an "LCD monitor" or "LCM," and those two terms are used interchangeably. "LCD" and "LCM," however, are not interchangeable: every LCM is an LCD screen, but not every screen employing LCD technology is an LCM.

Advantages

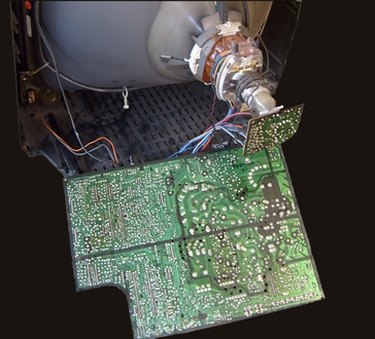

Now that LCD technology can show a full range of color, LCD screens are making older cathode ray tube (CRT) technology obsolete. This is because LCD screens consume less electricity and take up less space than CRT screens.

Similar Technologies

Other technologies often used in the same way as LCD screens are plasma display panels (PDPs) and electroluminescent displays (ELDs). ELD and LCD technology are sometimes combined in the same device, with the LCD forming the figures and the electroluminescent panel providing the backlight that illuminates them.