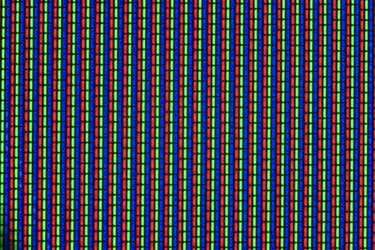

Video Graphics Array (VGA) and megapixels both describe resolution, and both are counted in pixels (a pixel is one dot in an image). VGA is a resolution for a computer monitor or screen, while megapixels measure resolution in digital photography and video. In digital video and photography, megapixels describe the resolution of the image source, and VGA describes the resolution of the displayed image.

VGA

A computer monitor running at VGA resolution displays an image 640 pixels wide by 480 pixels high. While computer monitors typically support higher resolutions, VGA is still used as the lowest-common-denominator resolution that all monitors support. Different monitors can support different resolutions and aspect ratios (the ratio of a screen's width to its height), which can create inconsistencies between programs. Sharing a common baseline resolution at least allows a program to work on any monitor, even if it is not displayed at the optimal resolution. CRT monitors adjust to different resolutions better than LCD (flat-screen) monitors, because an LCD has a fixed grid of pixels and must scale any image that does not match it. As a result, LCD monitors built for higher resolutions have difficulty displaying VGA resolution clearly. When computers first boot up, they display in VGA resolution before the operating system loads.

Megapixel

A megapixel is 1 million pixels. Megapixels are commonly used to express the resolution of a digital camera as a single number. More megapixels mean a higher-resolution image. Megapixels are also useful for comparing the resolution of images that do not share a common aspect ratio. A camera's megapixel rating matters more for printing photographs than for displaying them on a monitor. A 1-megapixel photograph may look clear on a computer monitor but will appear blocky if printed on 4-by-6-inch paper. Digital photographs are typically printed at 300 dots per inch. A formula to determine how many megapixels are needed to print an image at a given size is: ((300 * inches wide) * (300 * inches high))/1,000,000. For a 4-by-6-inch print, that is (1,200 * 1,800)/1,000,000 = 2.16 megapixels, more than twice what a 1-megapixel photograph provides.
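The print formula above can be sketched as a small Python function. This is only an illustration: the function name `megapixels_for_print` and the `dpi` parameter are made up for this example, and the 300 dots-per-inch default comes from the typical print density mentioned above.

```python
def megapixels_for_print(width_in, height_in, dpi=300):
    """Estimate the megapixels needed to print at the given size.

    width_in, height_in: print dimensions in inches.
    dpi: print density in dots per inch (300 is the typical value cited above).
    """
    width_px = dpi * width_in    # pixels needed across the width
    height_px = dpi * height_in  # pixels needed across the height
    return (width_px * height_px) / 1_000_000


if __name__ == "__main__":
    # A 4-by-6-inch print needs 1,200 x 1,800 pixels.
    print(megapixels_for_print(4, 6))   # 2.16
    # An 8-by-10-inch print needs 2,400 x 3,000 pixels.
    print(megapixels_for_print(8, 10))  # 7.2
```

Passing a lower `dpi` value shows how the requirement drops for prints that do not need full photo quality.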

Comparing VGA and Megapixels

While VGA and megapixels are used to measure different things, a screen resolution can be expressed as an equivalent megapixel count. For example, a VGA screen has about as many pixels as a 0.3-megapixel image, an HDTV running at 720p has about as many pixels as a 1-megapixel image, and an HDTV running at 1080p has about as many pixels as a 2-megapixel image. Video recording on a digital camera does not need as high a megapixel count as photography that is intended for printing.
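The comparison above can be checked with a few lines of Python. This is a rough sketch: the helper name `to_megapixels` is made up for this example, and the 720p and 1080p dimensions (1280 by 720 and 1920 by 1080) are the standard HDTV frame sizes, which the text above does not spell out.

```python
# Width and height in pixels for each display resolution.
# The VGA size comes from the article; the HDTV sizes are the standard
# frame dimensions, assumed here.
RESOLUTIONS = {
    "VGA": (640, 480),
    "720p": (1280, 720),
    "1080p": (1920, 1080),
}


def to_megapixels(width_px, height_px):
    """Convert pixel dimensions to a megapixel count."""
    return width_px * height_px / 1_000_000


if __name__ == "__main__":
    for name, (w, h) in RESOLUTIONS.items():
        print(f"{name}: {w}x{h} = {to_megapixels(w, h):.2f} MP")
    # VGA: 640x480 = 0.31 MP
    # 720p: 1280x720 = 0.92 MP
    # 1080p: 1920x1080 = 2.07 MP
```

The exact values differ slightly from the rounded figures in the text, which is why the comparison is approximate.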