Why Phishing Still Works Despite Decades of Security Training

Two decades of security awareness campaigns have taught employees to spot red flags: suspicious senders, misspelled domains, implausible urgency, awkward grammar. Those signals now describe a style of attack that modern phishing has largely abandoned. Meanwhile, Microsoft detected roughly 35 million business email compromise attempts per year between April 2022 and April 2023, about 156,000 every day. Separately, the FBI reported more than 21,000 BEC complaints with adjusted losses exceeding $2.7 billion in a single year.

The question of why phishing still works has a specific answer: attackers stopped building crude fakes and started borrowing the infrastructure their targets already trust.

Modern campaigns originate inside OAuth authentication flows, SharePoint file shares, and browser verification prompts. They arrive from legitimate identity providers, carry the visual signatures of real business tools, and exploit not ignorance but the ordinary cognitive conditions of a workday. Telling people to scrutinize their email more closely does not address any of that. The dominant response has shown limited effectiveness against a threat that has moved well past what it was designed to handle.

This piece explains four interconnected reasons phishing keeps succeeding in well-staffed organizations: attacks that hide inside trusted systems, cognitive mechanics that behave predictably under pressure, training that produces modest results at best, and defenses that remain mostly reactive. Each section ends with what the evidence supports doing instead.

How phishing bypasses security defenses inside OAuth and SharePoint

The conventional heuristic for spotting phishing, "does this look suspicious?", assumes attackers are building fakes from scratch. They stopped doing that.

Think of it like a counterfeit notice: not forged on cheap paper, but printed on the real organization's letterhead, bearing the real department's seal. The delivery mechanism is genuine. Only the destination is malicious.

Two categories of attack have made this structural shift concrete. The first targets credentials and session tokens. The second uses trusted flows to redirect users to malware or secondary phishing pages. Understanding the difference matters, because the defenses that address each are not the same.

OAuth is the open standard that lets services like Google Workspace or Microsoft Entra ID handle login and redirect users back to authorized applications. It includes a built-in feature that allows identity providers to redirect users to specific landing pages under defined conditions, typically in error scenarios. In March 2026, the Microsoft Security Blog documented multiple threat actors exploiting exactly this mechanism: by crafting authentication requests with deliberately invalid parameters, they trigger an OAuth error and cause Entra ID or Google Workspace to redirect users to attacker-controlled pages. The goal in these campaigns is not credential theft; it's redirection. After the OAuth handoff, some users land directly on phishing pages, while others receive malicious file downloads. Because the initiating URL originates inside a legitimate identity provider, it passes through conventional email and browser phishing filters cleanly. To the recipient, it looks normal because at the surface level, it is.
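
One way to make the redirect problem concrete is to look past the trusted hostname and ask where the link will eventually send the user. The sketch below is a minimal illustration under stated assumptions, not a reproduction of Microsoft's detection logic: the `IDP_HOSTS` list, the `TRUSTED_REDIRECT_HOSTS` allowlist, and the function name `triage_oauth_link` are all hypothetical. It parses a link, and if the link targets a known identity provider, reports whether the `redirect_uri` parameter points somewhere the organization has not approved.

```python
from urllib.parse import urlparse, parse_qs

# Assumption: hosts an organization treats as identity-provider login endpoints.
IDP_HOSTS = {"login.microsoftonline.com", "accounts.google.com"}

# Assumption: redirect destinations the organization has approved (hypothetical).
TRUSTED_REDIRECT_HOSTS = {"portal.example.com", "sso.example.com"}


def triage_oauth_link(url: str) -> dict:
    """Summarize an identity-provider link and flag unapproved redirect targets."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    if host not in IDP_HOSTS:
        return {"is_idp_link": False, "redirect_host": None, "flagged": False}

    # parse_qs returns a list per key and percent-decodes the values.
    params = parse_qs(parsed.query)
    redirect_uri = params.get("redirect_uri", [""])[0]
    redirect_host = (urlparse(redirect_uri).hostname or "").lower() or None

    # Flag when the provider would hand the user to a host nobody approved.
    flagged = redirect_host is not None and redirect_host not in TRUSTED_REDIRECT_HOSTS
    return {"is_idp_link": True, "redirect_host": redirect_host, "flagged": flagged}
```

The design point is that the first hostname in the link proves very little; the destination is what needs to be checked. A real filter would also have to account for which applications are registered in the tenant, which this sketch does not attempt.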

Adversary-in-the-Middle (AiTM) attacks operate differently. Rather than redirecting users to a second-stage page, the attacker proxies the legitimate login flow, intercepting the session token while the user completes a real authentication. Standard push-based MFA doesn't stop this because the user genuinely signed in; the attacker sits in the middle of the connection and captures the session cookie that authentication produces.
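
Because a stolen session cookie is replayed from the attacker's own infrastructure, one observable side effect is the same session appearing from more than one network location. The following is a rough sketch of that idea, assuming a hypothetical list of sign-in events with `session_id` and `ip` fields; real sign-in logs use different field names, and legitimate users also change IP addresses (VPNs, mobile networks), so this is a signal to investigate rather than a verdict.

```python
from collections import defaultdict


def sessions_seen_from_multiple_ips(events):
    """Return {session_id: sorted IPs} for sessions used from more than one IP.

    `events` is an iterable of dicts with hypothetical keys "session_id" and "ip".
    """
    ips_by_session = defaultdict(set)
    for event in events:
        ips_by_session[event["session_id"]].add(event["ip"])
    return {sid: sorted(ips) for sid, ips in ips_by_session.items() if len(ips) > 1}
```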

In January 2026, Microsoft documented an AiTM campaign targeting energy sector organizations that illustrated how far this technique extends. After stealing session cookies, attackers operated from inside a victim's SharePoint environment using legitimate internal identities, sending follow-on phishing to over 600 additional targets. They also created inbox rules to silently delete incoming security alerts, ensuring the victim wouldn't notice. Password resets alone didn't remediate the compromise; the attacker's persistence mechanisms remained intact.

A third technique, ClickFix, completes the picture. It impersonates recognizable browser prompts, including Google reCAPTCHA, Cloudflare Turnstile, and Microsoft Word extension errors, and convinces users to paste commands into the Windows Run dialog or PowerShell terminal themselves. Microsoft observed thousands of enterprise devices affected every day in early 2025, even on endpoints with detection software running. Because the malicious action is performed by the user rather than automated code, many endpoint controls don't intercept it.

When phishing starts inside an OAuth flow from Entra ID, arrives as a SharePoint sharing notification from a colleague's real account, or presents as a CAPTCHA verification screen, the mental model of "look for the suspicious thing" has already failed. There is no suspicious thing to see.

Why people still fall for phishing: the cognitive mechanics

Knowledge of phishing is nearly universal in professional environments. Click rates are not zero. These two facts aren't a contradiction; they're the expected result of how modern attacks are designed.

Phishing doesn't defeat knowledge. It routes around it. The attack is engineered to reach a person at the moment their judgment is least engaged: under time pressure, acting on behalf of someone with authority, completing what feels like a routine task.

Cognitive biases, predictable patterns of thinking that depart from deliberate analysis, are the mechanism. Attackers don't need to trick people into being irrational. They need to manufacture the conditions that reliably produce fast, unexamined decisions. A peer-reviewed study published in October 2025 annotated 482 real phishing emails and identified ten cognitive biases reliably present, including authority bias, urgency effect, negativity bias, zero-risk bias, and conformity. The researchers mapped these to a four-stage sequence: attention capture, trust construction, emotional priming, behavioral elicitation. The design moves a target from noticing an email to acting on it before analytical thinking reengages.

The sequence is the point. Urgency captures attention through fast, intuitive processing. Authority cues reduce skepticism. Emotional triggers suppress rational analysis. By the time the user reaches the action step, the psychological work has already been done.

Field evidence confirms this isn't theoretical. RMC's assessment work from late February 2026 found one consistent pattern: employees who correctly identified obvious phishing became measurably more compliant when scenarios were wrapped in IT support context, operational urgency, or access approval workflows. Hesitation dropped most sharply when the scenario involved the IT service desk or a routine access request, exactly the contexts where employees have been conditioned to cooperate quickly.

MFA fatigue follows the same logic. When attackers send repeated authentication push prompts, some employees approve one to stop the interruption, not because they've been deceived about what MFA is, but because compliance feels like the path of least resistance during a busy workday. RMC observed this directly: push notifications were approved simply to make the prompts stop.
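
The pattern described here, a run of ignored or denied prompts followed by a single approval, is regular enough to look for in authentication logs. The sketch below assumes a hypothetical, time-ordered list of prompt records with `user`, `time`, and `result` fields; the ten-minute window and the threshold of three ignored prompts are illustrative defaults, not recommended values.

```python
from datetime import datetime, timedelta


def approvals_after_push_burst(prompts, window=timedelta(minutes=10), min_ignored=3):
    """Return approved prompts that follow a burst of denied or expired prompts.

    `prompts` must be sorted by time. Each record is a dict with hypothetical
    keys "user", "time" (datetime), and "result" ("approved", "denied", "expired").
    """
    flagged = []
    for i, prompt in enumerate(prompts):
        if prompt["result"] != "approved":
            continue
        # Count earlier prompts for the same user that were not approved
        # and that fall inside the look-back window.
        ignored = [
            p for p in prompts[:i]
            if p["user"] == prompt["user"]
            and p["result"] in {"denied", "expired"}
            and timedelta(0) <= prompt["time"] - p["time"] <= window
        ]
        if len(ignored) >= min_ignored:
            flagged.append(prompt)
    return flagged
```

A check like this does not ask the employee to behave differently; it treats the approval that ends a burst as something the system should question.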

The trajectory from here is toward attacks that are even harder to distinguish from legitimate interactions. SecurityWeek's expert panel from January 2026 describes AI tools already capable of optimizing message framing in real time based on target response. One researcher characterizes this as a shift from static templates toward "sticky personas," dialogue agents that build rapport before redirecting behavior.

Phishing succeeds not because employees lack knowledge, but because attacks are engineered for the conditions under which human decisions are most automatic. That's a design problem. Training employees to recognize surface signals doesn't reach it.

Why the standard response falls short

Annual phishing awareness training is the dominant organizational countermeasure. The evidence on its effectiveness is not encouraging, though the picture is more complicated than a simple verdict of failure.

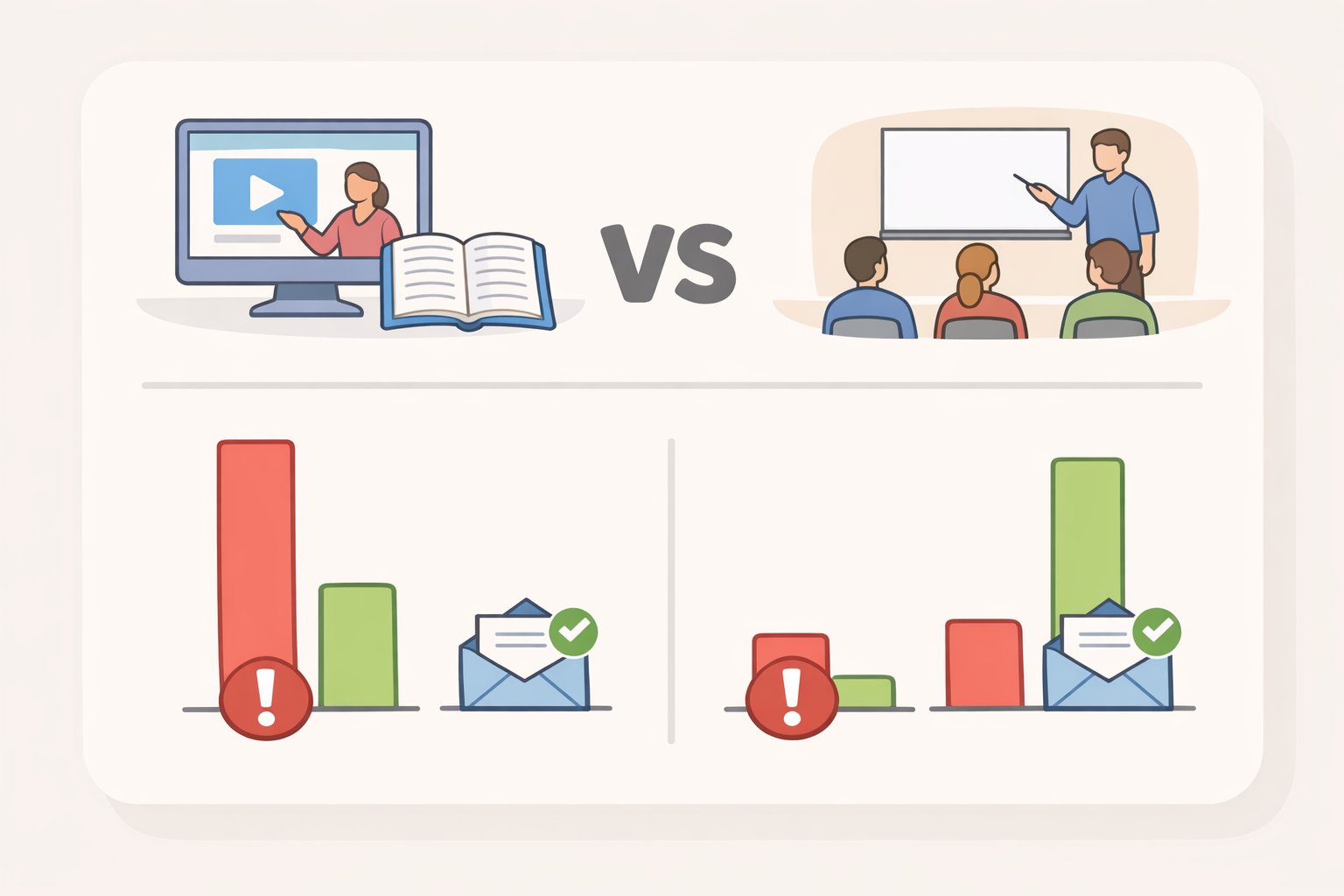

The clearest data comes from a large-scale preprint study of 12,511 employees at a US fintech firm, with data collected from December 2024 through April 2025. Researchers tested both lecture-based and interactive training formats. Neither produced a statistically significant reduction in click rates (p=0.450) or reporting rates (p=0.417). Effect sizes were below 0.01 across all conditions, a level the authors describe as indicating minimal practical value for organizational security investment. This is a preprint and hasn't completed peer review, but the scale and methodology are notable.

A study of 19,789 UCSD Health employees, covered by Dark Reading in June 2025, found similar results with a harder-to-ignore wrinkle: employees who completed multiple static training sessions became 18.5% more likely to click phishing links, apparently because completing training produced unwarranted confidence. Even the best-performing, most-trained employees were still fooled by convincing lures more than 15% of the time.

Cybersecurity Dive's analysis from October 2025, drawing on multiple academic reviews including a 2024 Leiden University meta-analysis of 69 studies, concluded there is "no evidence that annual security awareness training correlates with reduced phishing failures." The Leiden researchers were precise about why: training reliably changes stated attitudes and knowledge scores. It rarely changes actual behavior under realistic conditions. Answering a quiz correctly is not the same as pausing to scrutinize an access request from the IT service desk at 4:45 on a Friday.

There is one partial exception worth acknowledging. The UCSD study found participants in genuinely interactive training sessions showed a 19% reduction in phishing link clicks. That's a real finding. But in the same study, more than half of all training sessions ended within 10 seconds of starting, and only 24% of participants completed their assigned course. Interactive training requires engagement; getting a large organization to engage is a different problem from building effective content.

The training model was designed for a prior generation of attacks: crude lures that rewarded recognition of suspicious signals. It was never built to prepare people for OAuth redirects from Entra ID or phishing messages delivered from a compromised colleague's SharePoint account. The gap isn't effort or investment. It's fit.

What actually reduces risk: structural defenses

The most effective defenses don't depend on a human correctly identifying an attack under time pressure. They make the credential unreplayable, limit what access a stolen session can reach, and reduce the available attack surface before any phishing attempt begins.

Replace replayable authentication

Phishing-resistant MFA, built on FIDO2, uses public-key credentials held by an authenticator, such as a hardware security key, rather than passwords, push notifications, or SMS codes. The credential is cryptographically scoped to the legitimate domain, and the browser reports the real origin during sign-in, so a phishing page, even a perfect replica, cannot capture or replay it. This is categorically different from standard push-based MFA, which AiTM attacks bypass by intercepting the session token after successful authentication.
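
The domain binding can be illustrated with the checks a server performs on a WebAuthn sign-in response. This is a simplified sketch, not a complete verifier: it covers only the origin, challenge, and relying-party ID checks, and deliberately omits signature verification, flag checks, and counter handling that a real implementation needs. The function name and parameters are assumptions for illustration; the field names `type`, `origin`, and `challenge`, and the 32-byte relying-party ID hash at the start of the authenticator data, follow the WebAuthn specification.

```python
import base64
import hashlib
import json


def check_origin_binding(client_data_json: bytes, authenticator_data: bytes,
                         expected_origin: str, rp_id: str,
                         expected_challenge: bytes) -> bool:
    """Check the origin-related fields of a WebAuthn assertion.

    Signature verification is intentionally omitted from this sketch.
    """
    client_data = json.loads(client_data_json)
    if client_data.get("type") != "webauthn.get":
        return False
    # The browser, not the page, records the origin the user was actually on.
    if client_data.get("origin") != expected_origin:
        return False
    # The challenge appears base64url-encoded without padding.
    encoded = base64.urlsafe_b64encode(expected_challenge).rstrip(b"=").decode("ascii")
    if client_data.get("challenge") != encoded:
        return False
    # The first 32 bytes of authenticator data are SHA-256 of the RP ID.
    return authenticator_data[:32] == hashlib.sha256(rp_id.encode("utf-8")).digest()
```

A proxy sitting on a lookalike domain cannot pass the origin check, because the browser writes the lookalike's origin into the signed data regardless of how convincing the page looks.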

The deployment question is real but tractable. CISA's case study of the USDA, published in November 2024, documented hardware-based FIDO authentication deployed to approximately 40,000 users who previously relied on username and password combinations, extending protection across more than 600 applications through single sign-on, including for workers who couldn't use traditional PIV cards. Microsoft's analysis of the January 2026 SharePoint campaign explicitly names FIDO2 security keys as the control that would have prevented the initial credential compromise.

Limit post-compromise impact

When prevention fails, and some percentage of the time it will, the question becomes how much damage can be done with what was stolen.

Microsoft's SharePoint case established that password resets are insufficient after an AiTM compromise. Organizations also need to revoke active session cookies and remove attacker-created inbox rules that suppress security alerts. These are post-access controls: they address what an attacker can do after getting in, not just how they got in.
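
Auditing inbox rules is the kind of cleanup step that is easy to automate once the rules have been exported. The sketch below assumes a hypothetical export format (dicts with `name`, `delete`, `move_to_folder`, and `subject_contains` keys) and an illustrative keyword list; actual mail platforms expose rules through their own APIs and schemas, which are not modeled here.

```python
# Assumption: words that suggest a rule is aimed at security notifications.
ALERT_TERMS = ("security", "alert", "phish", "suspicious", "sign-in")


def suspicious_inbox_rules(rules):
    """Return names of rules that hide messages with alert-like subjects.

    `rules` is a list of dicts in a hypothetical export format.
    """
    flagged = []
    for rule in rules:
        # A rule hides mail if it deletes it or files it out of the inbox.
        hides_mail = bool(rule.get("delete")) or bool(rule.get("move_to_folder"))
        subjects = [s.lower() for s in rule.get("subject_contains", [])]
        targets_alerts = any(term in s for s in subjects for term in ALERT_TERMS)
        if hides_mail and targets_alerts:
            flagged.append(rule["name"])
    return flagged
```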

For OAuth-based attacks, Microsoft's March 2026 advisory recommends limiting which users can grant application consent, regularly auditing and removing overprivileged or unused OAuth app registrations, and treating third-party OAuth applications as a persistent attack surface requiring ongoing governance. OAuth app permissions have the same persistence problem as session cookies: once granted, they remain until actively reviewed and revoked.
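
A periodic review of this kind can be reduced to two questions per application: does it hold broad permissions, and is anyone still using it? The following sketch assumes a hypothetical inventory of app records with `name`, `scopes`, and `last_used` fields. The scope names are examples of broad Microsoft Graph permissions chosen for illustration, and the 90-day threshold is an arbitrary assumption rather than a recommendation from the advisory.

```python
from datetime import date, timedelta

# Assumption: an illustrative, non-exhaustive list of broad permissions.
HIGH_PRIVILEGE_SCOPES = {
    "Mail.ReadWrite", "Mail.Send", "Files.ReadWrite.All", "Directory.ReadWrite.All",
}

UNUSED_REASON = "no recorded use in the review window"


def apps_needing_review(apps, today, unused_after=timedelta(days=90)):
    """Return {app name: [reasons]} for apps that are overprivileged or unused."""
    findings = {}
    for app in apps:
        reasons = []
        risky = sorted(HIGH_PRIVILEGE_SCOPES & set(app["scopes"]))
        if risky:
            reasons.append("high-privilege scopes: " + ", ".join(risky))
        last_used = app.get("last_used")
        if last_used is None or today - last_used > unused_after:
            reasons.append(UNUSED_REASON)
        if reasons:
            findings[app["name"]] = reasons
    return findings
```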

Remove the attack surface through system hardening

For ClickFix-style attacks that rely on users executing commands from browser prompts, Microsoft recommends disabling the Windows Run dialog via Group Policy where it isn't operationally required, restricting PowerShell execution paths, and configuring Windows Terminal to warn users when pasted content contains multiple commands. These controls remove the execution opportunity rather than depending on users to recognize the manipulation.
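
The paste warning is worth a closer look, because it shows how a control can act on the content itself instead of on the user's judgment. The sketch below is a loose illustration of that idea and not how Windows Terminal or Group Policy is implemented: the separator pattern, the list of risky tokens, and the function name `paste_warnings` are all assumptions, and a determined attacker could evade a keyword list like this one.

```python
import re

# Assumption: characters and operators that chain more than one command.
COMMAND_SEPARATORS = re.compile(r"[\r\n;]|&&|\|\|")

# Assumption: an illustrative list of tools often seen in pasted lures.
RISKY_TOKENS = re.compile(
    r"\b(powershell|pwsh|mshta|curl|iwr|iex|invoke-expression|invoke-webrequest)\b"
    r"|-enc(odedcommand)?\b",
    re.IGNORECASE,
)


def paste_warnings(text: str) -> list:
    """Return human-readable warnings for clipboard text about to be executed."""
    warnings = []
    if COMMAND_SEPARATORS.search(text.strip()):
        warnings.append("multiple commands")
    if RISKY_TOKENS.search(text):
        warnings.append("script or download tooling")
    return warnings
```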

For high-risk decisions involving identity verification, SecurityWeek's expert panel converges on a process design principle: stop authenticating identity through channels that can be spoofed. Voice, video, and push notifications can all be faked or intercepted. Building verification workflows through pre-established alternate channels, such as executive passcodes or platform-specific verification codes, removes the attack surface from the decision entirely rather than asking employees to detect increasingly convincing fakes in the moment.

A different frame for the problem

The reason phishing still works is not that employees have failed to learn. It's that the threat evolved faster than the response.

Attacks now originate inside legitimate platforms, exploit predictable cognitive patterns rather than ignorance, and are produced at scale by commercially available toolkits. The phishing-as-a-service platform SheByte offers AI-generated templates for around $200 per month, SecurityWeek reported in January 2026. Infrastructure can be burned and replaced within hours, Security Boulevard noted that same month. The fintech preprint's finding that lure difficulty alone more than doubles click rates, from 7% on easy lures to 15% on harder ones, implies that as AI makes high-difficulty lures cheaper to produce at scale, some clicks are simply going to happen regardless of training.
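
The arithmetic behind "some clicks are simply going to happen" is short enough to write down. The sketch below uses the preprint's 7% and 15% click rates and adds two assumptions of its own: that each recipient clicks independently of the others, and a hypothetical campaign size of 50 recipients.

```python
def relative_increase(easy_rate: float, hard_rate: float) -> float:
    """Ratio of the hard-lure click rate to the easy-lure click rate."""
    return hard_rate / easy_rate


def prob_at_least_one_click(click_rate: float, recipients: int) -> float:
    """Chance that at least one recipient clicks, assuming independent recipients."""
    return 1 - (1 - click_rate) ** recipients


if __name__ == "__main__":
    print(round(relative_increase(0.07, 0.15), 2))
    print(round(prob_at_least_one_click(0.07, 50), 3))
    print(round(prob_at_least_one_click(0.15, 50), 4))
```

Under those assumptions, 15% against 7% is a ratio of about 2.14, and a 50-recipient campaign yields at least one click roughly 97% of the time even with easy lures, rising above 99.9% with hard ones. The per-person rate matters less than the fact that an attacker only needs one click.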

The decision framework this points toward is practical:

- Stop relying on visual suspicion cues. OAuth redirect URLs from Entra ID, SharePoint sharing notifications, and CAPTCHA prompts look legitimate because they are legitimate at the surface. Detection based on "does this look suspicious" fails when the attack is designed to look like work.

- Replace replayable credentials where possible. Phishing-resistant authentication (FIDO2/passkeys) is the one technical control with strong evidence for breaking the credential-theft step. The USDA deployment demonstrates it can be scaled, including for users who can't use traditional smart cards.

- Design for compromise, not just prevention. Revoking session cookies, auditing inbox rules, and governing OAuth app permissions limit post-compromise damage independent of whether the initial click was prevented.

- Redesign high-risk processes to be spoof-proof. Wire transfers, access grants, and executive requests should be verified through channels that cannot be faked by voice or video alone, not because employees are careless, but because the tools to fake those channels are now commercially available.

The documented trajectory (AI-generated payloads in phishing campaigns, ClickFix attacks reaching thousands of devices daily, OAuth redirects bypassing email filters) describes a threat that has already moved well past what annual training was designed to handle. The organizations better positioned for what comes next are the ones that have shifted accountability from individual vigilance to system design.